What Is a Model?

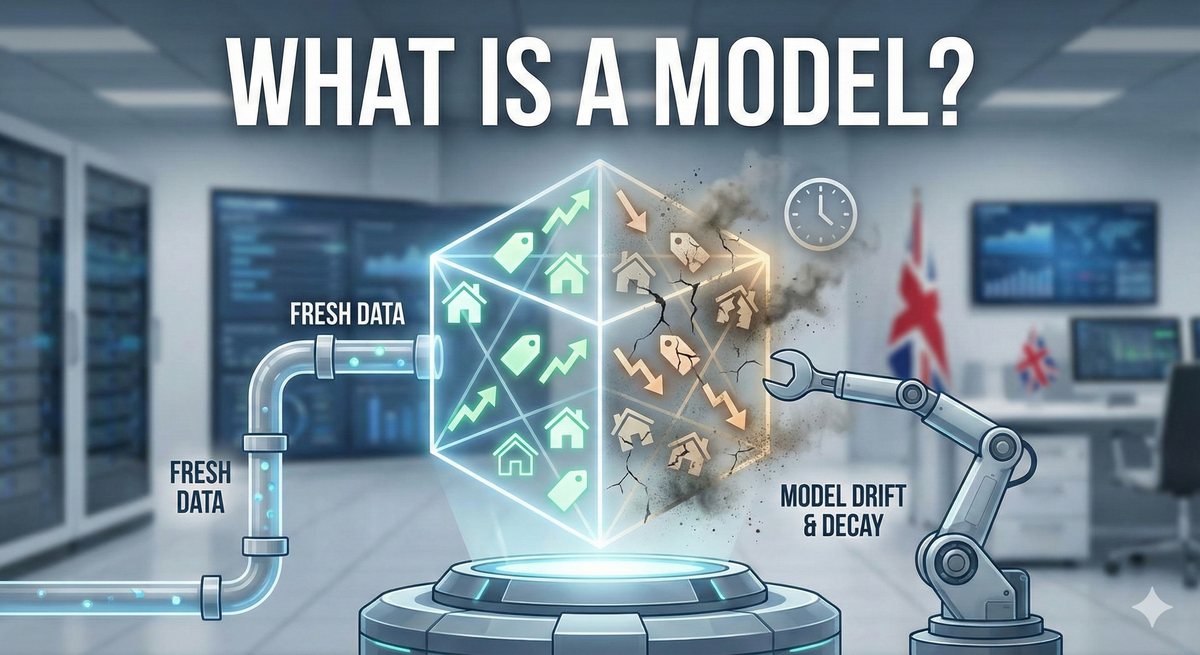

AI models are snapshots of learned patterns that degrade over time. Learn why your AVM or lead scorer needs regular retraining, how to spot model drift, and what to ask vendors about when they last updated their models. Understand the model lifecycle every estate agent should know.

In plain English: In AI, a model is what you get after a machine learning algorithm has finished learning from data. It’s the learned pattern that the system uses to make predictions or decisions. Think of it as the “trained brain” of an AI system—it contains all the knowledge gained from analyzing thousands or millions of examples.

Why It Matters to Estate Agents

Every AI tool you use relies on a model. When you use:

- An AVM (Automated Valuation Model) - That’s literally a model that learned property pricing patterns

- A lead scoring system - It’s using a model trained on your past enquiries

- A property description generator - It’s using a large language model like GPT-4

- An image recognition tool - It’s using a computer vision model

Understanding models helps you ask the right questions:

- “When was this model last updated?”

- “What data was used to train this model?”

- “How do you know this model still works accurately?”

Here’s the critical insight: Models are snapshots of knowledge from a specific point in time. A model trained on 2019 property data won’t work well in 2024. Markets change. Buyer behavior evolves. Models must be retrained regularly or they degrade.

This is why “one-time setup” for AI tools should be a red flag. Good AI requires ongoing model updates as new data becomes available.

Real-World Example: Lead Scoring Models

Let’s follow the lifecycle of a lead scoring model for your agency:

Step 1: Training the Model (Month 0)

Your CRM analyzes 5,000 past enquiries from 2023:

- 500 resulted in completed sales

- 1,500 scheduled viewings but didn’t buy

- 3,000 never responded after initial contact

The machine learning algorithm finds patterns:

- Enquiries with mobile numbers convert 3x better than email-only

- Buyers mentioning specific streets convert 4x better than generic area enquiries

- Enquiries received Tuesday-Thursday convert better than weekend enquiries

- Enquiries that ask about schools lead to an attended viewing 85% of the time

These patterns get encoded into a model—a mathematical representation of “what predicts a serious buyer.”
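As a rough illustration of how a pattern such as "converts 3x better" can be measured, the sketch below compares conversion rates for enquiries with and without one signal. The function name `conversion_lift`, the field names `has_mobile` and `converted`, and the toy data are all assumptions made for this example, not any CRM's actual interface. A real lead scoring model would weigh many signals together rather than one at a time.

```python
def conversion_lift(enquiries, feature):
    """Return how many times more often enquiries with `feature` convert
    compared with enquiries without it (3.0 means "3x better")."""
    with_feature = [e for e in enquiries if e[feature]]
    without_feature = [e for e in enquiries if not e[feature]]
    if not with_feature or not without_feature:
        raise ValueError("need enquiries both with and without the feature")

    rate_with = sum(e["converted"] for e in with_feature) / len(with_feature)
    rate_without = sum(e["converted"] for e in without_feature) / len(without_feature)
    if rate_without == 0:
        raise ValueError("no conversions without the feature; lift is undefined")
    return rate_with / rate_without


# Toy data, invented for illustration: 3 of 10 mobile enquiries convert,
# 1 of 10 email-only enquiries converts.
toy_enquiries = (
    [{"has_mobile": True, "converted": True}] * 3
    + [{"has_mobile": True, "converted": False}] * 7
    + [{"has_mobile": False, "converted": True}] * 1
    + [{"has_mobile": False, "converted": False}] * 9
)

mobile_lift = conversion_lift(toy_enquiries, "has_mobile")
print(f"Mobile enquiries convert {mobile_lift:.1f}x better than email-only")
```

Running the same calculation on each signal in turn gives a crude picture of which ones matter, which is the intuition behind the patterns listed above.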

Step 2: Using the Model (Months 1-6)

New enquiry arrives: “Interested in viewing properties on Beech Road, ideally 3-bed, good schools nearby. Mobile: 07XXX. Available Tues/Wed.”

The model analyzes this:

- ✓ Specific street mentioned (strong signal)

- ✓ Mobile number provided (strong signal)

- ✓ School mention (strong signal)

- ✓ Weekday availability (moderate signal)

Score: 89/100 - HOT LEAD

Your team prioritizes this enquiry, and the score proves right: a viewing is booked within 2 hours and an offer is made within a week.
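To make the scoring step concrete, here is a minimal sketch of a weighted score. The weights and the HOT/WARM/COLD thresholds are hypothetical, hand-picked so that the example enquiry totals 89; a trained model would learn its own weights from past enquiries and is usually more complex than a simple sum.

```python
# Hypothetical weights, hand-picked so the example enquiry totals 89.
# A trained model learns its own weights from past enquiries.
SIGNAL_WEIGHTS = {
    "specific_street": 27,       # strong signal
    "mobile_number": 25,         # strong signal
    "school_mention": 25,        # strong signal
    "weekday_availability": 12,  # moderate signal
}


def score_lead(signals):
    """Add up the weights of the signals present, capped at 100."""
    total = sum(weight for name, weight in SIGNAL_WEIGHTS.items() if signals.get(name))
    return min(total, 100)


def lead_label(score):
    """Map a score to a priority band (thresholds are illustrative)."""
    if score >= 80:
        return "HOT LEAD"
    if score >= 50:
        return "WARM LEAD"
    return "COLD LEAD"


beech_road_enquiry = {
    "specific_street": True,
    "mobile_number": True,
    "school_mention": True,
    "weekday_availability": True,
}
score = score_lead(beech_road_enquiry)
print(f"Score: {score}/100 - {lead_label(score)}")
```

The point of the sketch is that the weights are fixed once training ends. The model keeps applying them to every new enquiry until someone retrains it.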

Step 3: Model Degradation (Months 7-12)

Market conditions change:

- A new major employer opens, changing buyer demographics

- Stamp duty holiday ends, affecting urgency patterns

- A property portal changes its enquiry form format

- Your agency expands into a new area with different buyer behavior

The model, trained on 2023 data, starts making poorer predictions. It’s scoring leads 89/100 that never convert. It’s rating genuinely hot leads as 45/100 because they don’t match old patterns.

Your model has drifted. It’s still using 2023 patterns in a 2024 market.
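One simple way to notice this kind of drift is to check how often leads scored as hot actually convert, period by period. The sketch below assumes each lead record carries a `score` and a `converted` flag; the function names, the hot threshold of 80, and the 15-point tolerance are illustrative choices, not an industry standard.

```python
def hot_lead_hit_rate(leads, hot_threshold=80):
    """Share of leads scored at or above `hot_threshold` that actually converted."""
    hot = [lead for lead in leads if lead["score"] >= hot_threshold]
    if not hot:
        return None  # no hot leads in this period, nothing to measure
    return sum(lead["converted"] for lead in hot) / len(hot)


def has_drifted(baseline_leads, recent_leads, max_drop=0.15):
    """Flag drift when the hot-lead hit rate falls by more than `max_drop`."""
    baseline = hot_lead_hit_rate(baseline_leads)
    recent = hot_lead_hit_rate(recent_leads)
    if baseline is None or recent is None:
        return False  # not enough data to judge
    return (baseline - recent) > max_drop


# Toy data, invented for illustration.
months_1_to_6 = (
    [{"score": 89, "converted": True}] * 8
    + [{"score": 85, "converted": False}] * 2
)
months_7_to_12 = (
    [{"score": 89, "converted": True}] * 4
    + [{"score": 89, "converted": False}] * 6
)

print("Drift detected:", has_drifted(months_1_to_6, months_7_to_12))
```

In this toy data the hit rate falls from 8 in 10 to 4 in 10, so the check flags drift.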

Step 4: Retraining the Model (Month 13)

You feed the algorithm 3,000 new enquiries from the past 6 months. It discovers:

- Mobile vs. email-only is no longer predictive (everyone uses mobile now)

- Enquiries mentioning “remote working” are the new strong signal

- Weekend enquiries now convert better than weekday (work patterns changed)

- Budget mentions became more predictive after stamp duty changes

A new model is created, encoding these updated patterns. The old model is retired, and predictions improve because they once again reflect current buyer behavior.
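Selecting the data for retraining can be as simple as keeping only recent enquiries. The following sketch assumes each enquiry has a `received` date; the function name `retraining_window` and the 30-days-per-month approximation are assumptions for illustration.

```python
from datetime import date, timedelta


def retraining_window(enquiries, today, months=6):
    """Keep only enquiries received in roughly the last `months` months."""
    cutoff = today - timedelta(days=30 * months)  # approximation: 1 month = 30 days
    return [e for e in enquiries if cutoff <= e["received"] <= today]


# Toy data, invented for illustration.
all_enquiries = [
    {"id": 1, "received": date(2023, 5, 10)},   # older than six months, excluded
    {"id": 2, "received": date(2023, 11, 2)},   # inside the window
    {"id": 3, "received": date(2024, 1, 15)},   # inside the window
]
fresh = retraining_window(all_enquiries, today=date(2024, 2, 1))
print([e["id"] for e in fresh])
```

A longer window gives the algorithm more examples to learn from, while a shorter one tracks the current market more closely. Which trade-off is right depends on how many enquiries you receive and how fast your market moves.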

This is why models need regular updates.

Common Misconceptions

“Once trained, models work forever.” No. Models encode patterns from their training data. When reality changes, models become less accurate. This is called “model drift” and it’s inevitable. Good AI vendors retrain models regularly (monthly or quarterly).

“Bigger models are always better.” Not true. A massive model trained on generic data often performs worse than a smaller model trained on data specific to your market. A model trained on Greater Manchester properties will often outperform a giant model trained on all UK properties when valuing Manchester homes.

“You need a new model for every property.” No. One model learns general patterns that apply across properties. You don’t need a separate model for 2-beds vs. 3-beds—a good model learns how bedroom count affects value along with everything else.

“Models learn continuously from every use.” Most don’t. They’re static once trained—they apply learned patterns but don’t update themselves with each prediction. Retraining requires deliberate action by the vendor (or you) to incorporate new data.

Questions to Ask Vendors

When evaluating AI tools, always ask about the model:

- “When was your model last retrained?”

  - Good answer: “We retrain monthly on rolling 12-month data”

  - Warning sign: “We trained it once in 2022”

  - Red flag: “I don’t know” or they can’t answer

- “What data was used to train the current model?”

  - Good answer: “50,000 UK property sales from the last 18 months, weighted toward Greater Manchester”

  - Warning sign: “Comprehensive property data from multiple sources” (vague)

  - Red flag: They can’t or won’t specify

- “How do you monitor model performance over time?”

  - Good answer: “We track accuracy weekly and retrain when it drops below 85%”

  - Warning sign: “We monitor it regularly” (no specific metrics)

  - Red flag: “The model performs consistently” (they’re not checking)

- “Does your model work specifically for my market/property type/price range?”

  - Good answer: Specific metrics for your segment (e.g., “87% accurate for £250k-£500k terraced properties in Greater Manchester”)

  - Warning sign: “It works across all markets” (probably means it’s mediocre everywhere)

  - Red flag: “Our algorithm is universally applicable”

- “Can I test your model against my historical data?”

  - Good vendors will let you validate the model on your own past transactions

  - This reveals whether it actually works for your specific business
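If a vendor does let you test against your own past transactions, the check itself can be simple. The sketch below assumes "accurate" means an estimate within 10% of the eventual sale price, which is one common way to define it but not necessarily the definition your vendor uses, so ask. The field names and figures are invented for illustration.

```python
def share_within_tolerance(valuations, tolerance=0.10):
    """Share of valuations whose estimate is within `tolerance` of the sale price."""
    if not valuations:
        raise ValueError("no valuations to test")
    hits = sum(
        abs(v["estimate"] - v["sale_price"]) <= tolerance * v["sale_price"]
        for v in valuations
    )
    return hits / len(valuations)


# Toy data, invented for illustration: vendor estimates vs. your completed sales.
past_sales = [
    {"estimate": 310_000, "sale_price": 300_000},  # about 3% off, within 10%
    {"estimate": 255_000, "sale_price": 290_000},  # about 12% off, outside 10%
    {"estimate": 402_000, "sale_price": 410_000},  # about 2% off, within 10%
    {"estimate": 480_000, "sale_price": 450_000},  # about 7% off, within 10%
]
accuracy = share_within_tolerance(past_sales)
print(f"{accuracy:.0%} of valuations were within 10% of the sale price")
```

Running the same check separately for each property type or price band shows whether a headline accuracy figure holds up in the segment you actually sell.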

Model Size and Performance

You’ll hear vendors brag about model size: “Our model has 175 billion parameters!” Here’s what you need to know:

Large language models (ChatGPT, Claude): Huge models with billions of parameters. Necessary for understanding and generating human language. These power property description generators and sophisticated chatbots.

Specialized property models: Much smaller (thousands or millions of parameters). A lead scoring model or AVM doesn’t need billions of parameters—it needs quality data relevant to your specific task.

Bigger isn’t automatically better:

- Large models are expensive to run (higher per-use costs)

- Large models can “overfit” (memorize noise instead of learning patterns)

- Large models require more data to train properly

- Large models are harder to interpret and debug

For most estate agency applications, a well-trained smaller model on relevant data beats a massive generic model.

The Model Lifecycle Every Estate Agent Should Understand

Stage 1: Training

- Algorithm analyzes historical data

- Discovers patterns that predict outcomes

- Encodes patterns into a model

- Time: Days to weeks

Stage 2: Validation

- Test model on data it hasn’t seen

- Measure accuracy, identify weaknesses

- Adjust if needed

- Time: Days

Stage 3: Deployment

- Model goes live in your tools

- Makes predictions on real enquiries/properties

- Performance should match validation testing

- Time: Ongoing

Stage 4: Monitoring

- Track accuracy over time

- Watch for model drift as markets change

- Identify when retraining is needed

- Time: Continuous

Stage 5: Retraining

- Feed algorithm new data

- Create updated model

- Replace old model with new one

- Time: Monthly to quarterly (depending on market volatility)

Good AI vendors manage this entire cycle. Poor vendors deploy once and forget.
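The monitoring and retraining stages can be tied together with a simple rule. This sketch uses the 85% threshold from the vendor example earlier and adds one assumption of its own: accuracy must stay below the threshold for two consecutive weekly checks, so that a single noisy week does not trigger a retrain. The function name and figures are hypothetical.

```python
def needs_retraining(weekly_accuracy, threshold=0.85, weeks=2):
    """Return True when accuracy has been below `threshold` for the last
    `weeks` consecutive weekly measurements."""
    if len(weekly_accuracy) < weeks:
        return False  # not enough history yet
    return all(acc < threshold for acc in weekly_accuracy[-weeks:])


# Toy weekly accuracy figures, invented for illustration (oldest first).
history = [0.90, 0.89, 0.87, 0.84, 0.82]
print("Retrain now:", needs_retraining(history))
```

A vendor with a real monitoring process should be able to describe a rule of this kind, including the metric, the threshold, and how often it is checked.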

When Models Go Stale

Signs your AI tool’s model needs retraining:

Accuracy decline: Lead scores that used to be right 85% of the time are now right only 65% of the time

Systematic errors: The AVM consistently undervalues properties with home offices (because WFH wasn’t a factor when it was trained)

Missed opportunities: Hot leads scored as cold; undervalued properties that sell quickly

Outdated assumptions: The model thinks buyers mentioning schools always want large gardens (true in 2019, false after pandemic-driven work-from-home shifts)

Market shifts: Your area gentrified, a major employer arrived/left, transport links changed—but the model doesn’t know

If your vendor can’t tell you when they last retrained their model, assume it’s stale.
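Systematic errors are easiest to see when you split results by segment. The sketch below averages the signed valuation error for properties with and without a home office; a clearly negative average for one group suggests the model undervalues it. The field names, the function name `mean_error_by_segment`, and the figures are all invented for illustration.

```python
from collections import defaultdict


def mean_error_by_segment(valuations, segment_key):
    """Average signed error (estimate minus sale price, as a share of sale price)
    for each value of `segment_key`. Negative means the model undervalues."""
    errors = defaultdict(list)
    for v in valuations:
        signed = (v["estimate"] - v["sale_price"]) / v["sale_price"]
        errors[v[segment_key]].append(signed)
    return {segment: sum(errs) / len(errs) for segment, errs in errors.items()}


# Toy data, invented for illustration.
sales = [
    {"estimate": 270_000, "sale_price": 300_000, "home_office": True},
    {"estimate": 360_000, "sale_price": 400_000, "home_office": True},
    {"estimate": 252_500, "sale_price": 250_000, "home_office": False},
    {"estimate": 198_000, "sale_price": 200_000, "home_office": False},
]
segment_errors = mean_error_by_segment(sales, "home_office")
for has_office, err in segment_errors.items():
    print(f"home_office={has_office}: average error {err:+.1%}")
```

An overall accuracy figure can hide this kind of problem, because errors in one segment are averaged away by good results elsewhere.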

The Bottom Line

A model is AI’s “memory” of what it learned—patterns encoded from training data. Models aren’t magic; they’re mathematical representations of patterns found in past data.

Good models:

- Are trained on relevant, recent data

- Are retrained regularly as markets change

- Have measurable accuracy you can verify

- Work specifically for your use case

Bad models:

- Are trained once and forgotten

- Use irrelevant or outdated data

- Make vague accuracy claims

- Are presented as universal solutions

When a vendor says “Our AI uses a sophisticated model,” your immediate questions should be: When was it trained? On what data? When was it last updated? And how do you know it still works?

Models are only as good as their last update.

Related Terms

- Training Data - What models learn from

- Model Drift - When models become less accurate as reality changes

- Retraining - Updating models with fresh data